
11/29/2023 Markov chain model

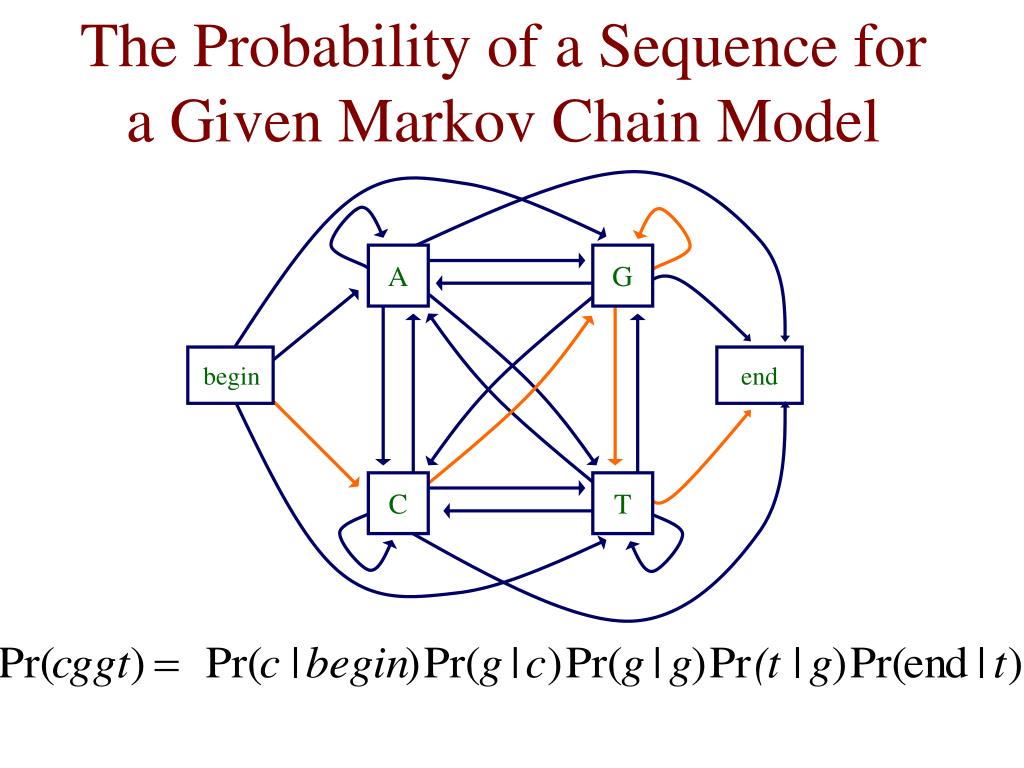

Markov chains, named after Andrey Markov, are mathematical systems that hop from one state (a situation or set of values) to another according to certain probabilistic rules. As per Wikipedia, "A Markov chain or Markov process is a stochastic model which describes a sequence of possible events where the probability of each event depends only on the state attained in the previous event." So in a Markov chain, the future depends only upon the present, NOT upon the past. A finite-state Markov chain is a process that consists of a finite number of states with the Markovian property and some transition probabilities $p_{ij}$, where $p_{ij}$ is the probability of moving from state $i$ to state $j$ in one step. Markov chains are a powerful and effective technique for modeling stochastic processes that are discrete in both time and state space.

As one application, to use the spatial Markov chain model for quantitative urban land-use predictions, it was necessary to ascertain the transfer (transition) probabilities at equal time steps.

As another, consider this problem. An airline reservation system has two computers, only one of which is in operation at any given time. A computer may break down on any given day with probability $p$. There is a single repair facility, which takes $2$ days to restore a computer to normal. Form a Markov chain by taking as states the pairs $(x, y)$, where $x$ is the number of machines in operating condition at the end of a day and $y$ is $1$ if a day's labor has been expended on a machine not yet repaired and $0$ otherwise.

I don't understand what $y$ represents in the phrase, "If a day's labor has been expended on a machine not yet repaired".

An understanding of the two applications above, along with the mathematical concept explained, can be leveraged to understand other Markov processes.
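One way to read $y$ in the airline problem: because a repair takes $2$ days, knowing $x$ alone does not tell you whether the broken computer will be ready tomorrow. The flag $y = 1$ records that the repair facility has already spent one day on it, so it will be finished after one more day. The sketch below writes down the transition probabilities under that reading. It is an illustration, not a definitive solution, and it assumes things the problem statement does not spell out: only the computer in operation can fail, a failure happens during the day, repair work on a failed computer starts the following day, and failures on different days are independent. The names `STATES`, `transition_matrix` and `step` are made up for this example.

```python
from fractions import Fraction

# States (x, y): x = computers in operating condition at the end of a day,
# y = 1 if one day's labor has already been spent on a computer still under repair.
STATES = [(2, 0), (1, 0), (1, 1), (0, 1)]


def transition_matrix(p):
    """Return {state: {next_state: probability}} under the assumptions stated above."""
    q = 1 - p  # probability that the computer in operation survives the day
    return {
        # Both computers fine: the one in operation either survives or fails.
        (2, 0): {(2, 0): q, (1, 0): p},
        # One broken, no labor yet: the first repair day is spent today.
        (1, 0): {(1, 1): q, (0, 1): p},
        # One broken, one day of labor done: the repair finishes today.
        (1, 1): {(2, 0): q, (1, 0): p},
        # Nothing operating (assumed: nothing can fail): repair finishes,
        # and the other broken computer still has no labor spent on it.
        (0, 1): {(1, 0): 1},
    }


def step(dist, P):
    """Advance a probability distribution over the states by one day."""
    new = {s: 0 for s in STATES}
    for state, mass in dist.items():
        for nxt, prob in P[state].items():
            new[nxt] += mass * prob
    return new


P = transition_matrix(Fraction(1, 10))

# Every row of a transition matrix must sum to 1.
assert all(sum(row.values()) == 1 for row in P.values())

# Start with both computers working and look two days ahead.
dist = {(2, 0): Fraction(1)}
for _ in range(2):
    dist = step(dist, P)
print(dist)
```

Using `Fraction` keeps the probabilities exact, which makes it easy to check that each row sums to 1. Passing a float for `p` also works if exact arithmetic is not needed.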
